3 min read

When Governance Becomes Pattern-Matching

The EU AI Act is the most ambitious AI regulation in the world. Most organisations will implement it like ISO 9001. That's not a criticism of the...


1 min read

Tilman Mürle: Aug 25, 2025 9:42:12 AM

Artificial intelligence is becoming part of critical business processes. From decision-making in finance to automation in operations, AI influences outcomes that matter. Yet one question keeps coming up: can anyone explain how the system reached its decision?

This is not just an academic challenge. Lack of explainability has direct consequences:

Compliance: Regulators increasingly require AI decisions to be transparent and accountable.

Trust: Customers and partners expect clarity on how conclusions are reached.

Risk: If you can’t interpret a system’s output, you can’t evaluate whether it’s safe.

"Explainable AI (XAI) is the practice of making AI decisions understandable for humans. Instead of black-box outputs, XAI provides transparency about why a model made a decision, what inputs influenced it, and where its limits are."

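To make the definition concrete, here is a minimal sketch of what "what inputs influenced it" can look like for the simplest possible case, a linear scoring model. Everything in it is an assumption for illustration: the feature names, weights, threshold, and validity ranges are hypothetical, and real systems typically need dedicated attribution methods rather than this direct weight-times-value breakdown.

```python
from dataclasses import dataclass

# Hypothetical linear scoring model; all numbers are illustrative only.
WEIGHTS = {"income": 0.5, "debt_ratio": -2.0, "years_employed": 0.25}
BIAS = -1.0
THRESHOLD = 0.0

# Hypothetical ranges the model is assumed to have been validated on.
VALID_RANGES = {
    "income": (0.0, 10.0),
    "debt_ratio": (0.0, 1.0),
    "years_employed": (0.0, 40.0),
}


@dataclass(frozen=True)
class Explanation:
    score: float
    approved: bool
    contributions: dict[str, float]  # weight * value for each input
    out_of_range: list[str]          # inputs outside the validated range


def explain(features: dict[str, float]) -> Explanation:
    """Score one case and report how each input moved the score."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    out_of_range = [
        name
        for name, (low, high) in VALID_RANGES.items()
        if not low <= features[name] <= high
    ]
    return Explanation(score, score >= THRESHOLD, contributions, out_of_range)


def top_factors(explanation: Explanation, n: int = 2) -> list[str]:
    """Return the n inputs with the largest absolute contribution."""
    ranked = sorted(
        explanation.contributions,
        key=lambda name: abs(explanation.contributions[name]),
        reverse=True,
    )
    return ranked[:n]
```

Calling `explain({"income": 4.0, "debt_ratio": 0.5, "years_employed": 2.0})` returns the decision together with the per-input contributions, and `out_of_range` flags cases where the model is being used outside the range it was checked on, which is one simple way to surface "where its limits are".
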
At Komplyzen, we embed XAI into your AI governance framework:

Compliance by Design: Evidence that models meet regulatory explainability requirements.

Audit Readiness: Tools and processes to document decision logic.

Human Oversight: Interfaces that make AI decisions interpretable for non-technical stakeholders.

Risk Management: Independent verification signals to test whether outputs remain trustworthy.
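
As an illustration of what documenting decision logic can involve, the sketch below writes each decision as a record with a SHA-256 digest and a link to the previous record, so later changes to a stored record become detectable. This is not a description of Komplyzen's tooling: the function names `audit_record` and `verify` and all field names are hypothetical, and a production audit trail would also need secure storage, access control, and retention rules.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Hash a canonical JSON form so the same content always gives the same digest."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_record(model_version: str, inputs: dict, decision: str,
                 contributions: dict, prev_digest: str = "") -> dict:
    """Build one audit entry; prev_digest chains it to the preceding entry."""
    body = {
        "model_version": model_version,
        "inputs": inputs,
        "decision": decision,
        "contributions": contributions,
        "prev_digest": prev_digest,
    }
    return {**body, "digest": _digest(body)}


def verify(record: dict) -> bool:
    """Recompute the digest and compare it with the stored one."""
    body = {key: value for key, value in record.items() if key != "digest"}
    return _digest(body) == record["digest"]
```

A second record created with `prev_digest=first["digest"]` ties the two entries together, and `verify` returns `False` for any record whose fields were edited after it was written.
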

AI adoption without explainability is fragile. Enterprises cannot outsource accountability to vendors or rely on certification labels alone. With XAI, you gain:

Confidence that your systems can withstand regulatory scrutiny.

Trust from customers and partners.

The ability to correct, improve, and govern AI proactively.

We are your accomplices for smart compliance. Our XAI solutions combine technical explainability with governance structures, ensuring that AI adoption in your organization is not just fast but also sustainable, trustworthy, and compliant.


6 min read

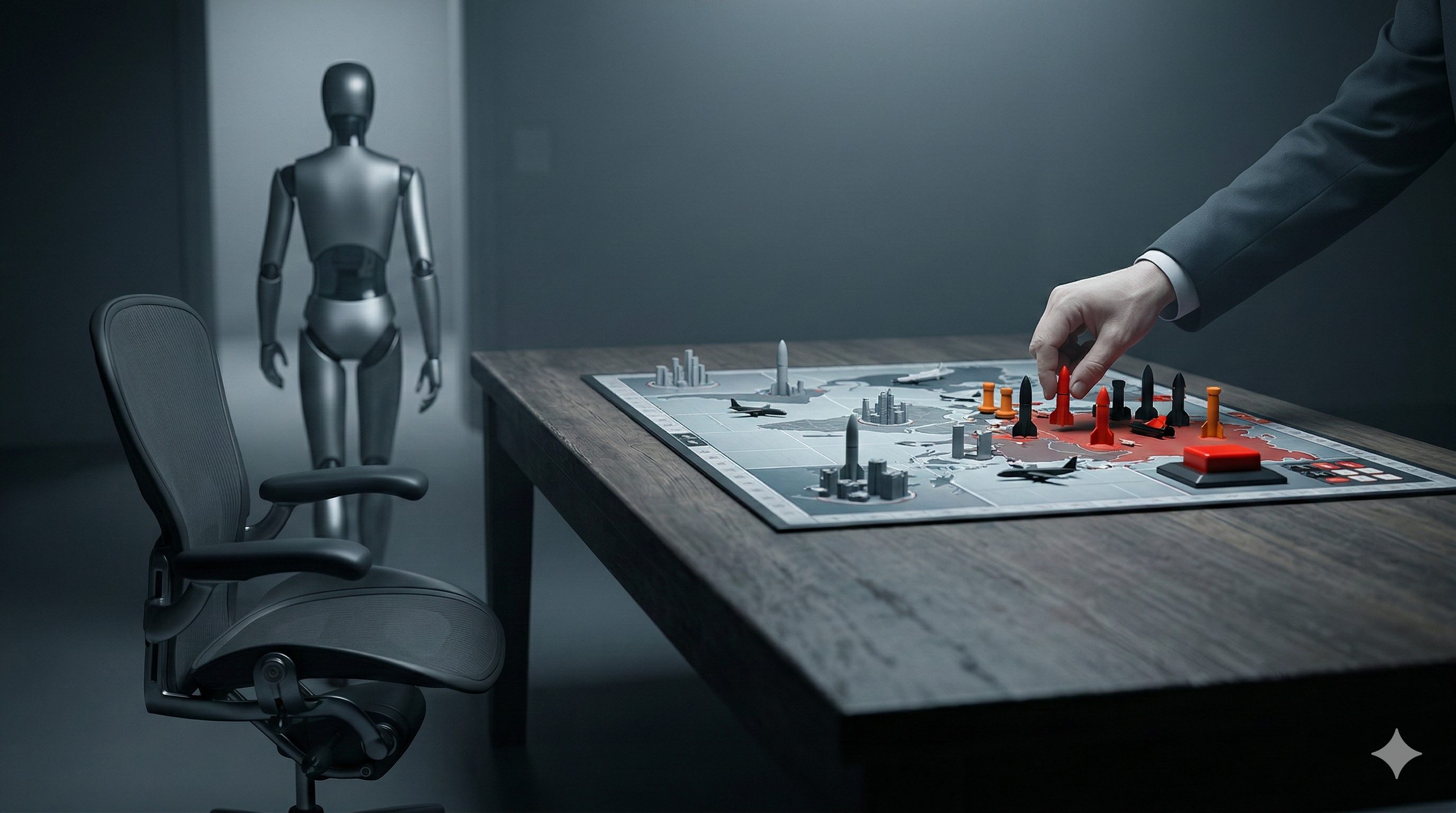

Apparently AI starts nuclear wars now. At least that's what Euronews reported today, citing a preprint from Kenneth Payne, Professor of Strategy at...

4 min read

Anthropic was founded in 2021 by researchers who left OpenAI over concerns that safety was being sacrificed for speed. Their Responsible Scaling...